This week Macro Mice gave the last presentation on our project, the Final Design Review. While we are happy with the results of our project, we are sad to leave it.

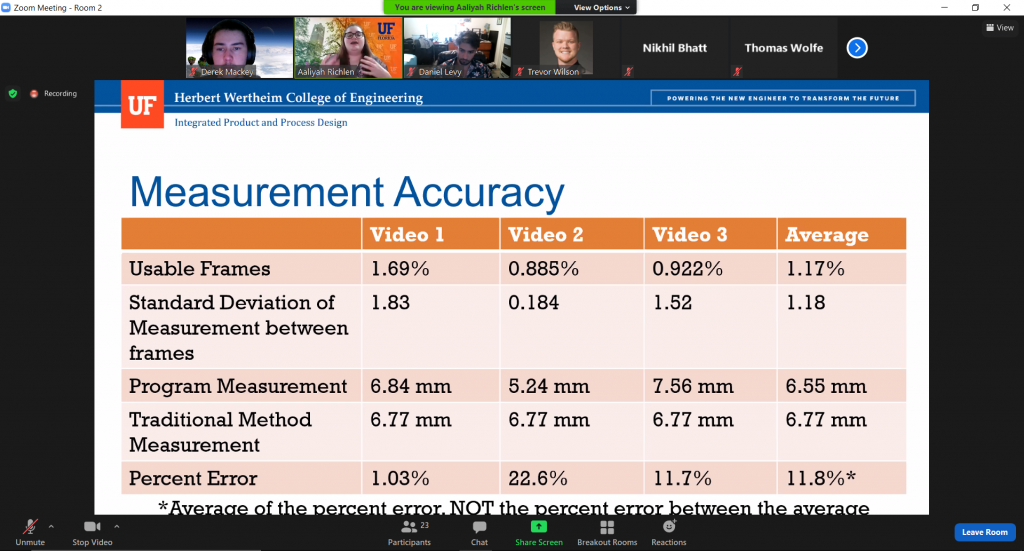

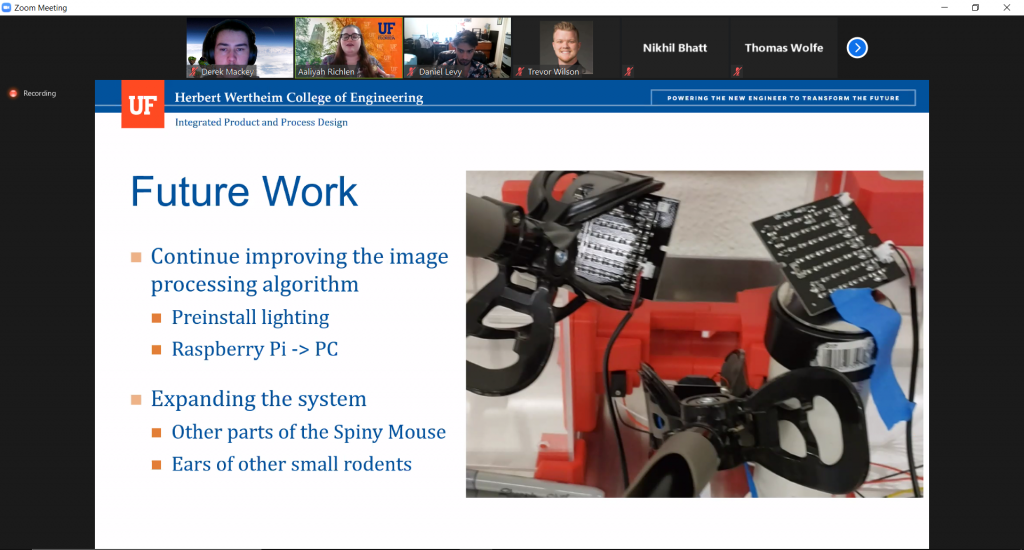

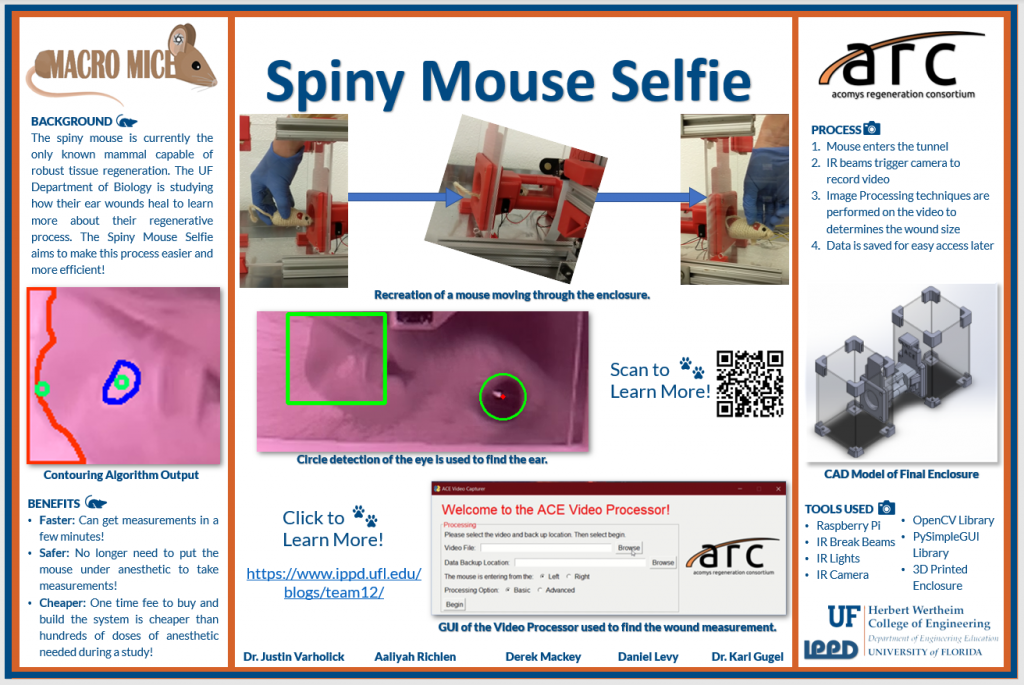

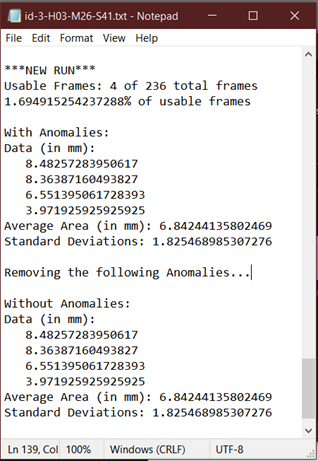

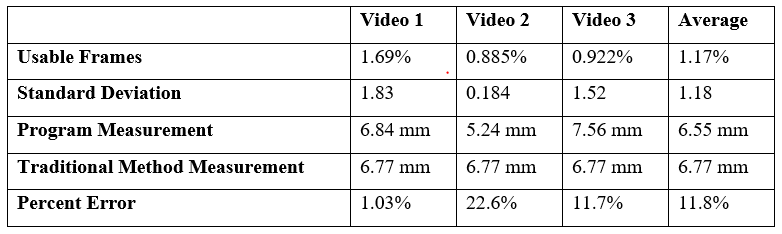

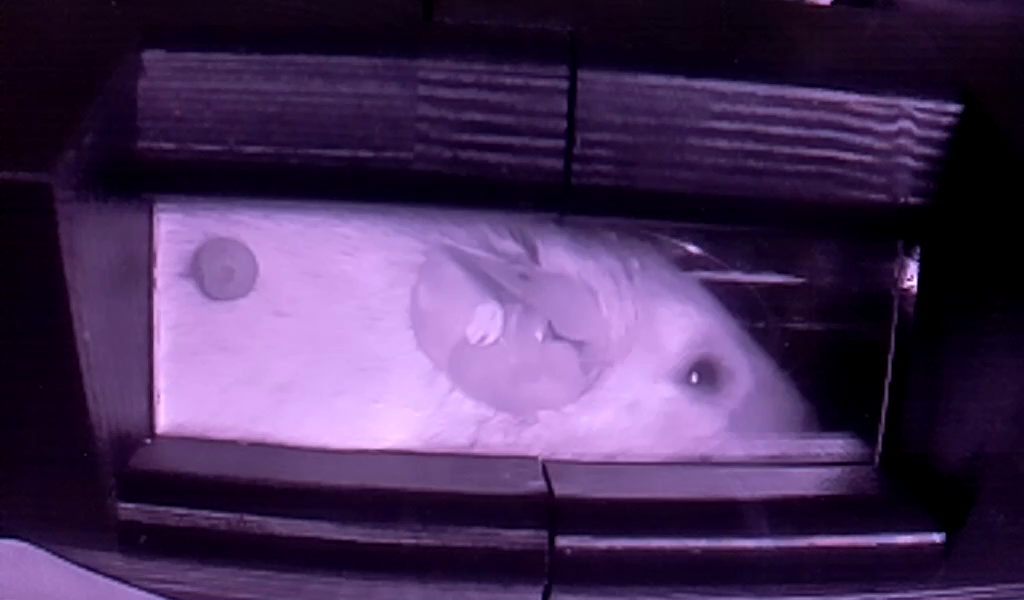

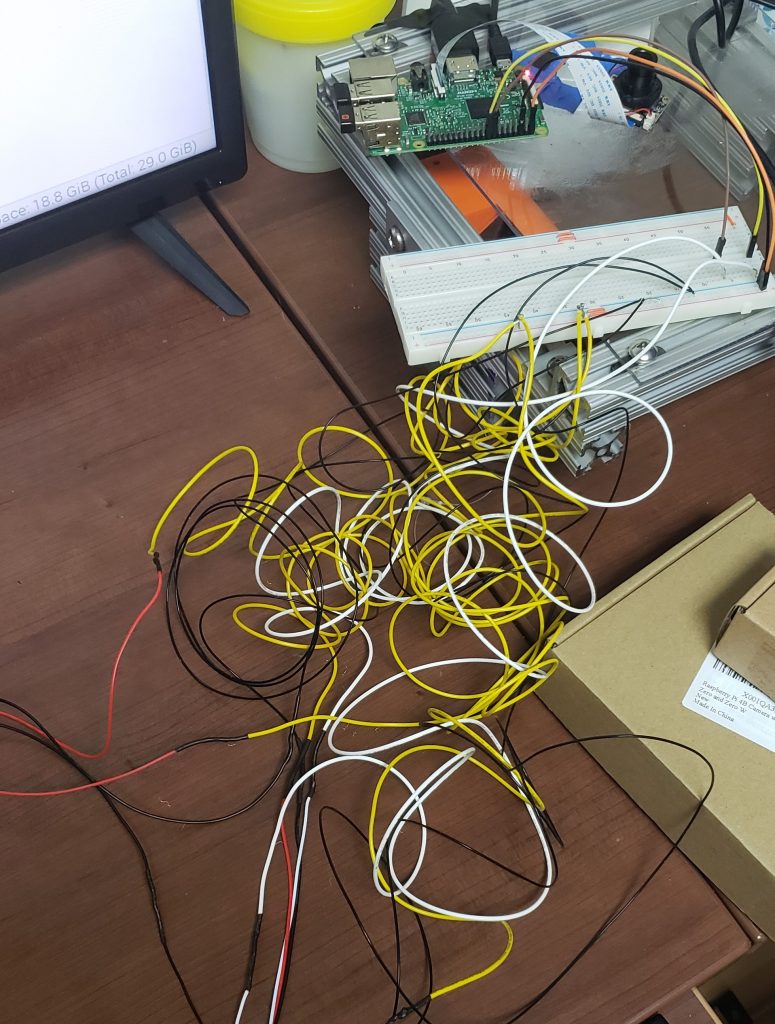

While Macro Mice plans to continue improving the prototype into the summer and fall, this event marked the end of our time at IPPD. In our final presentation, the team explained how the prototype works, gave a demo, presented our results (including the Technical Performance Measures that were met and unmet), and outlined our future goals. In our final report, we went into more detail on how the prototype works, how to set it up and use it, the list of materials, and the prototype's results. We would like to highlight that the main goal of the project was to achieve a percent error of less than 25% when compared to the traditional method of measurement, and we achieved this in the end with a percent error of 11.8%. However, we believe that it can be improved, and the plan of action table on the next page documents some of the ways we plan to attack this. These include creating permanent mounts for the camera and lighting equipment and improving the image processing algorithm itself. While our time with IPPD officially ends next week, we hope to continue using the lab space and equipment for this work. We will draft a plan for the IPPD admin so they understand what we hope to do.
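For readers who have not worked with percent error before, here is a minimal sketch of how such a figure can be computed and checked against a target. It assumes the common textbook definition (absolute difference divided by the reference value); our report may define it differently, and the function names and sample numbers are hypothetical, chosen only for illustration.

```python
def percent_error(measured: float, reference: float) -> float:
    """Percent error of a measurement relative to a reference value.

    Assumes the common definition |measured - reference| / |reference| * 100.
    """
    if reference == 0:
        raise ValueError("reference value must be non-zero")
    return abs(measured - reference) / abs(reference) * 100.0


def meets_target(error_pct: float, target_pct: float = 25.0) -> bool:
    """True when the error is strictly below the target (25% was our goal)."""
    return error_pct < target_pct


if __name__ == "__main__":
    # Hypothetical values, not real project data: a prototype reading
    # compared with a reading taken by the traditional method.
    error = percent_error(measured=1.118, reference=1.0)
    print(f"percent error: {error:.1f}%, meets target: {meets_target(error)}")
```

The target is passed as a parameter so the same check can be reused if a stricter goal is set for future versions of the prototype.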

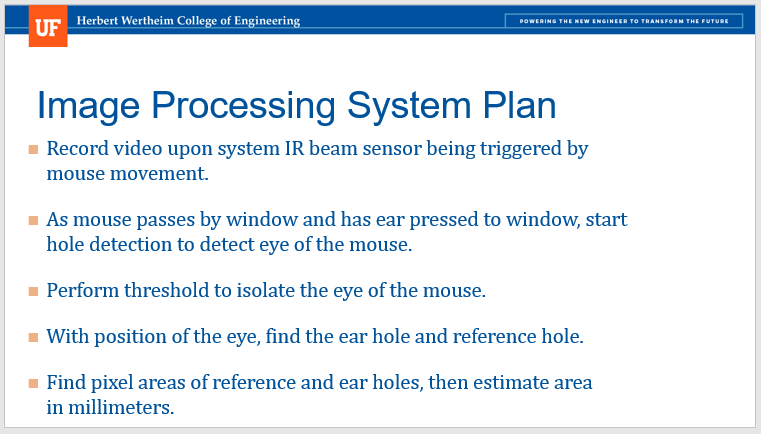

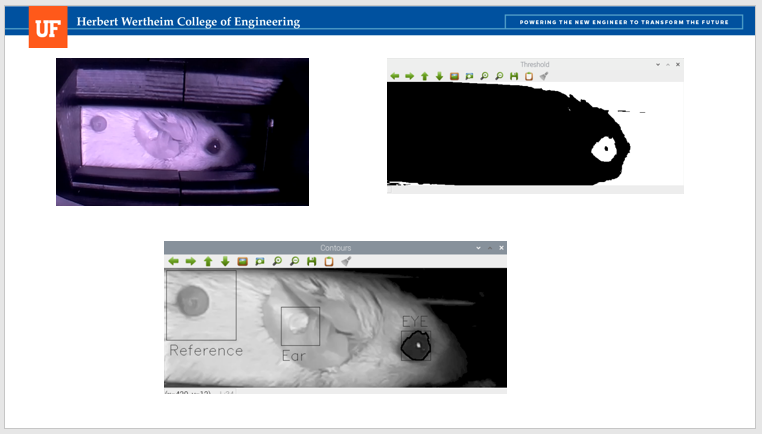

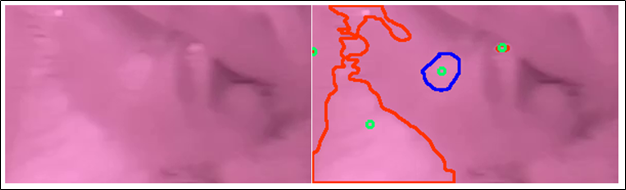

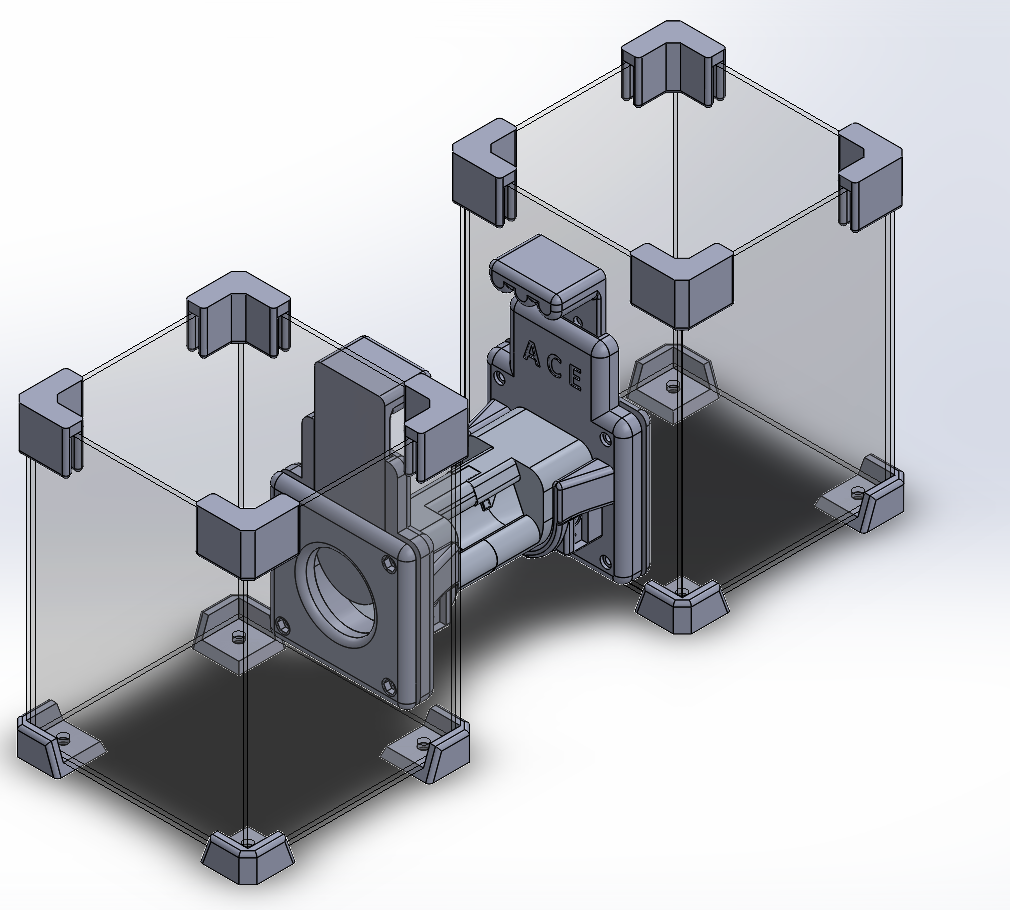

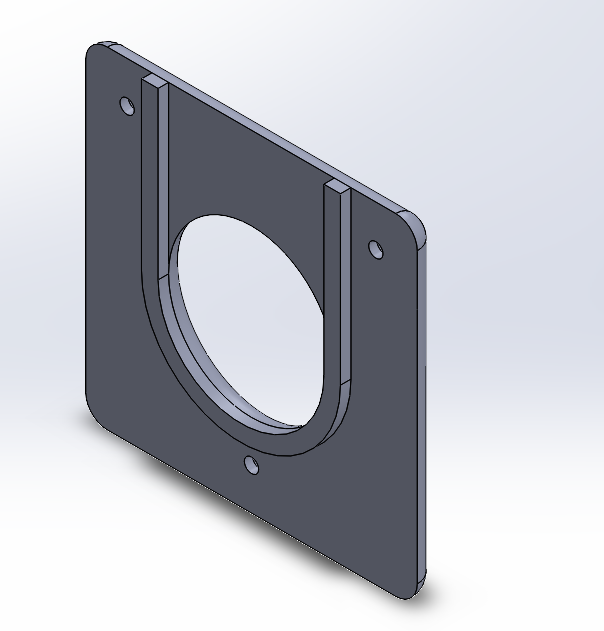

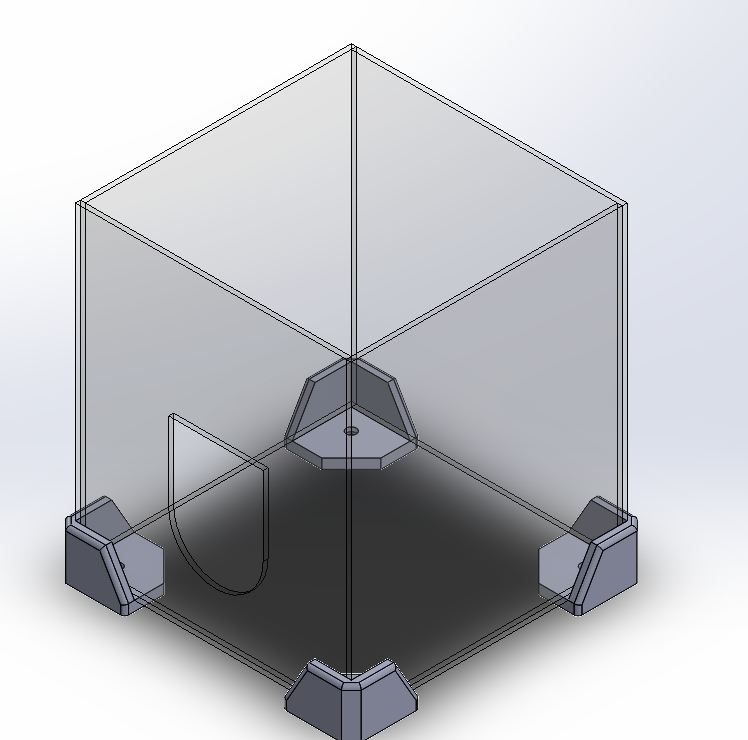

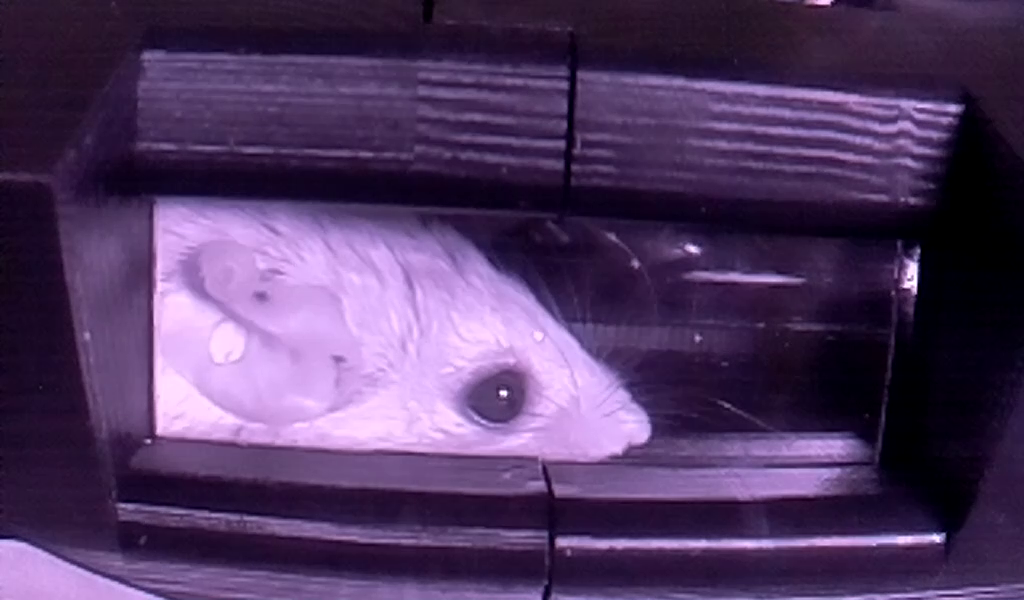

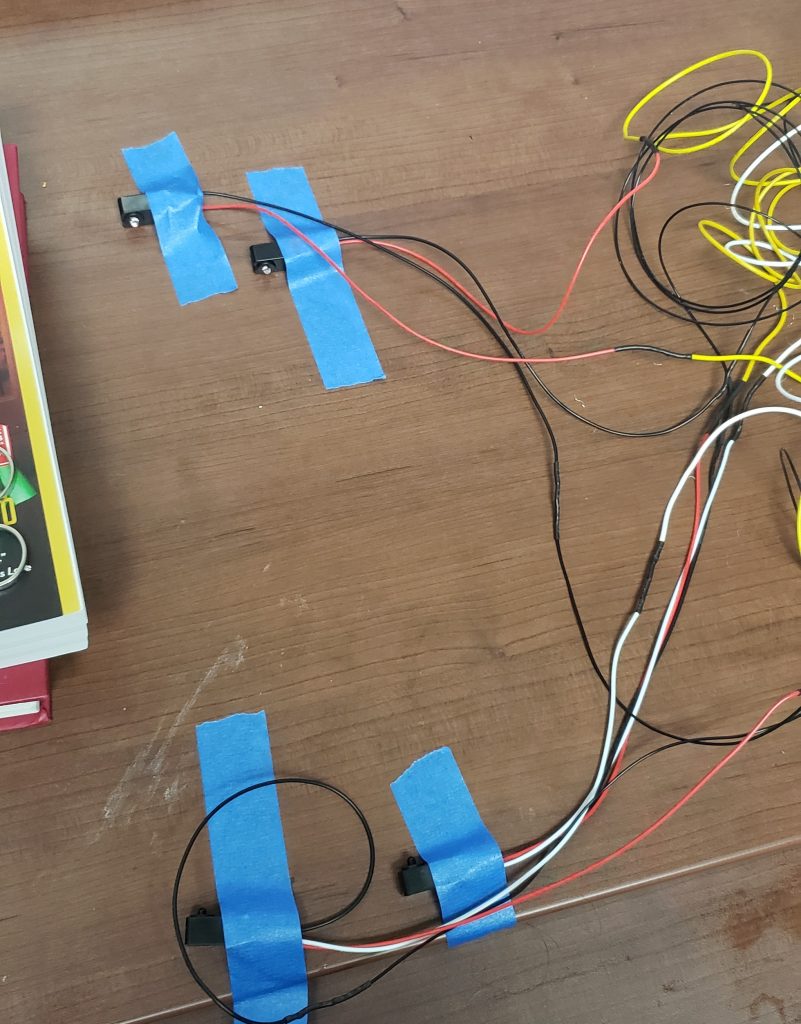

Some of the agreements made at the beginning of the project have changed significantly. Our original design relied on the mouse reaching for a treat, on the assumption that it would hold still long enough in that moment for us to get an image of the ear. We quickly realized that this would not work because of the mouse's behavior. Instead, we switched to a tunnel model, in which video is captured as the mouse passes through a tunnel. We also changed our processing system from real-time to post-processing, as this offers a higher chance of capturing the hole. With these changes, we met all specifications except the price of the system. The goal was to have a complete system for under $200. However, we quickly realized that a decent measurement accuracy would require more expensive components, and the extra spending was approved by our coach and liaison. In the end, the cost of the system was $325.75.

We would like to close out this final post by thanking everyone who helped us along the way. We would not have had the opportunity to work on this project without the IPPD team at UF. We would also like to specifically thank Lizzie Meier, IPPD's lab manager, without whose help and quick responses the project would not have been finished in time. We would also like to thank our coach, Dr. Karl Gugel, whose guidance helped us move in the right direction. Finally, we would like to thank our project liaison, Dr. Varholick, who went above and beyond the traditional liaison engineer role and helped design and complete this project as much as the rest of the team. And of course, we are grateful to him for introducing us to these remarkable little creatures.