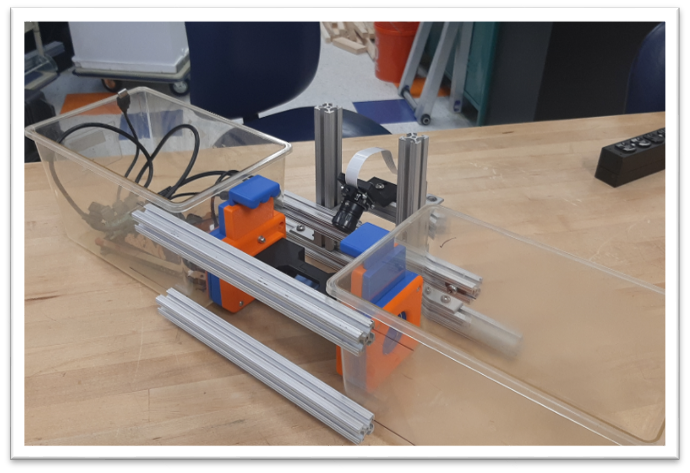

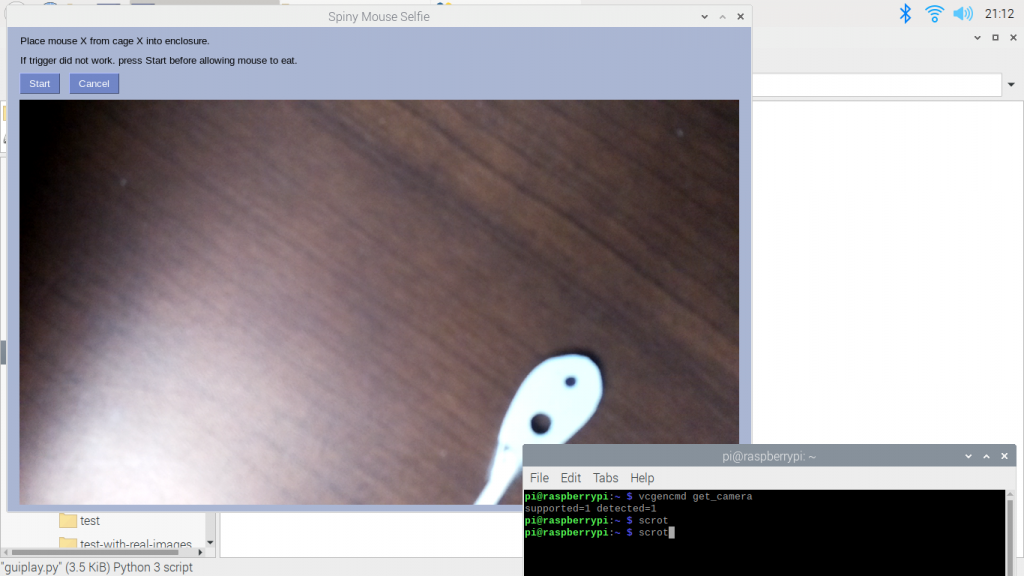

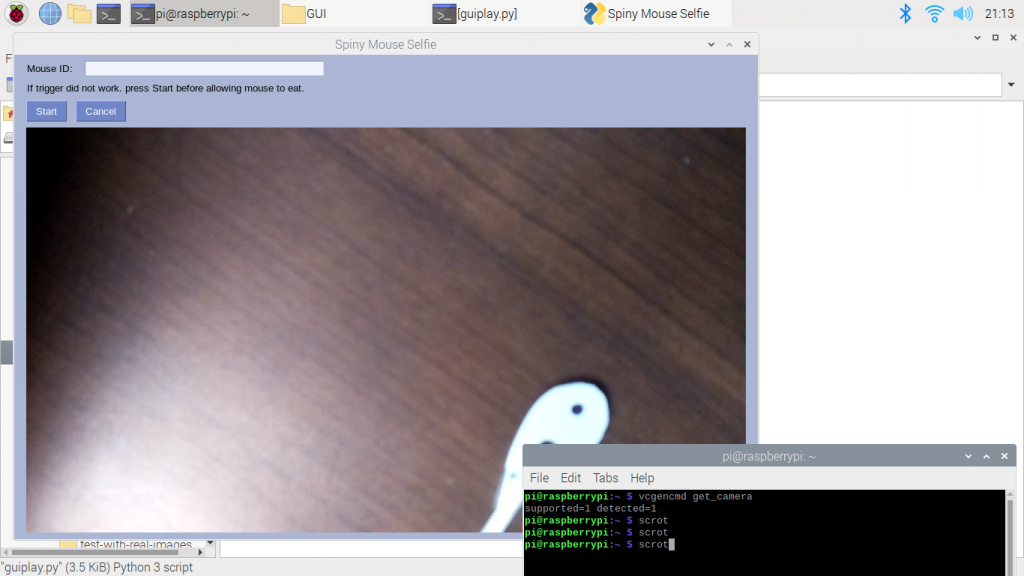

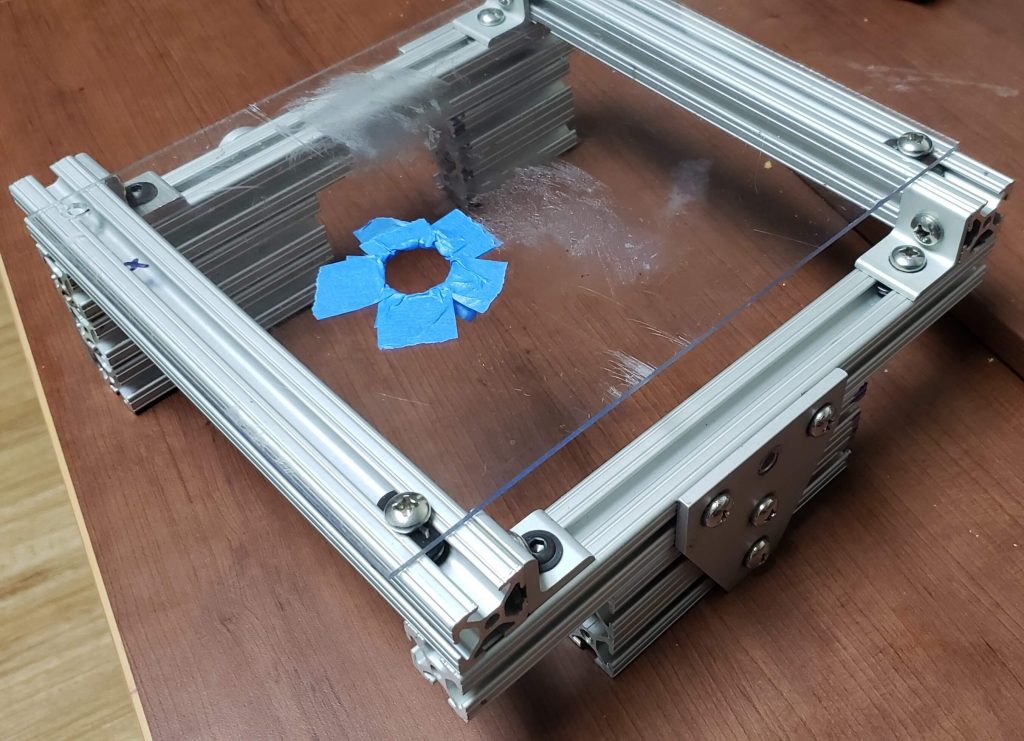

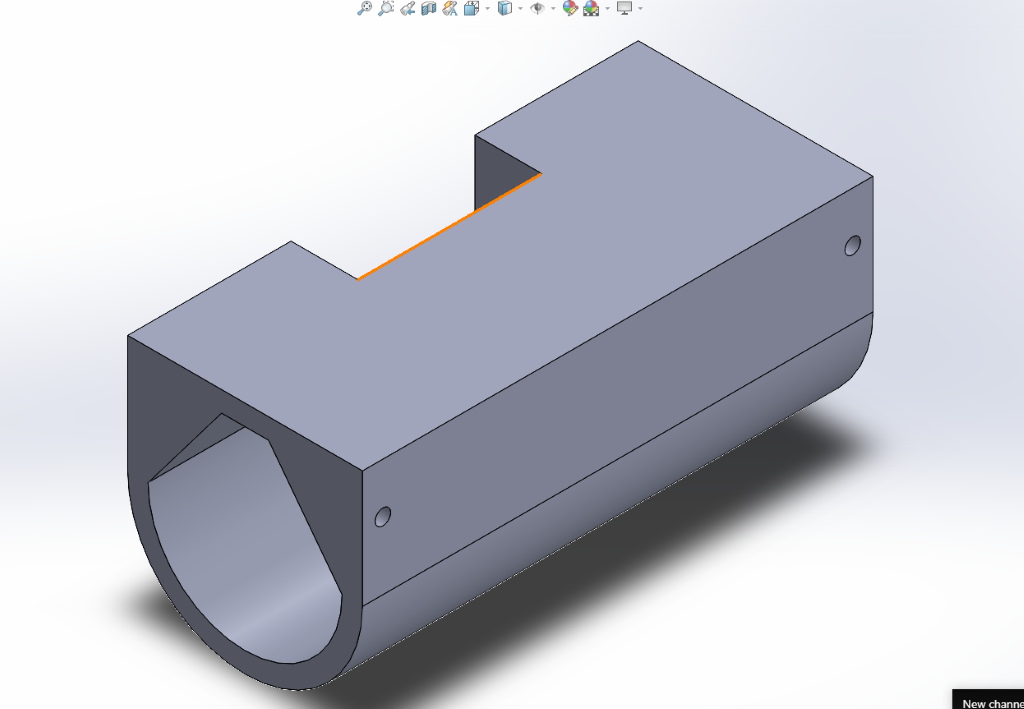

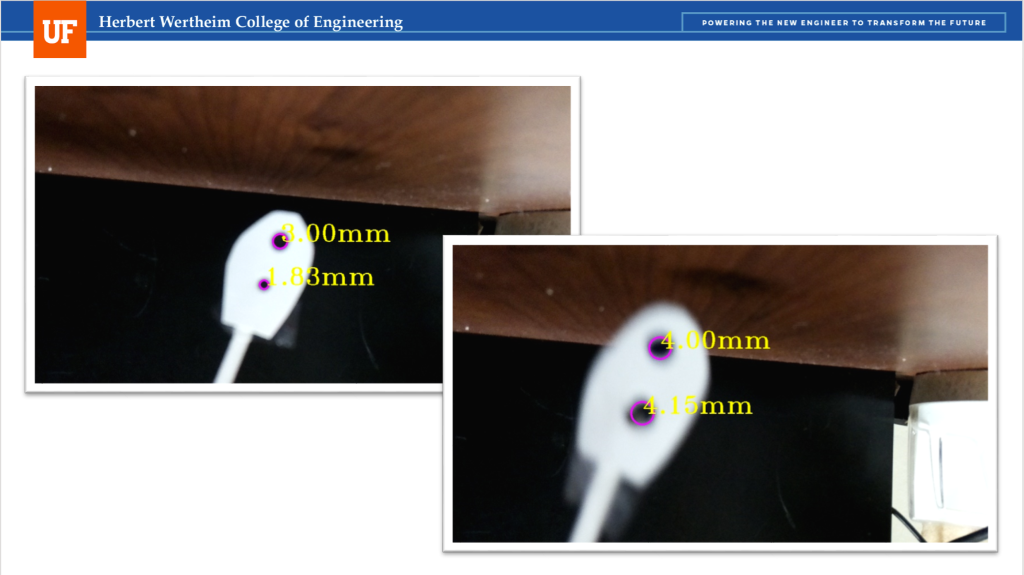

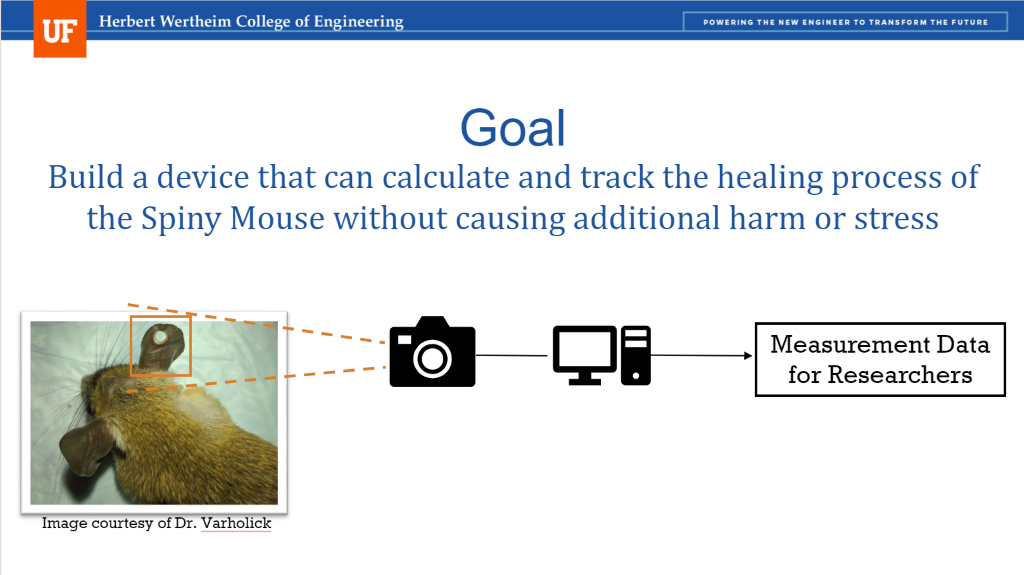

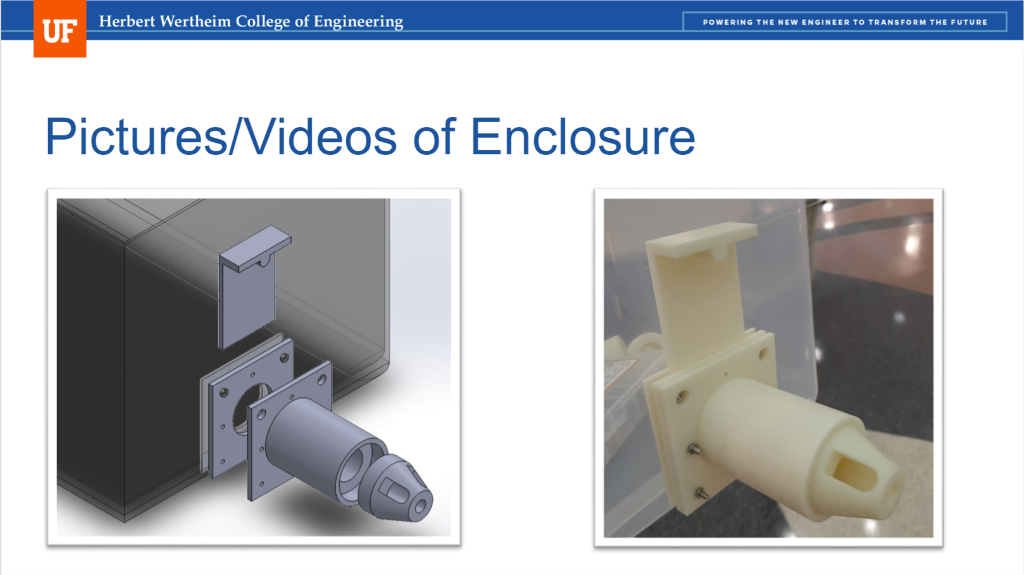

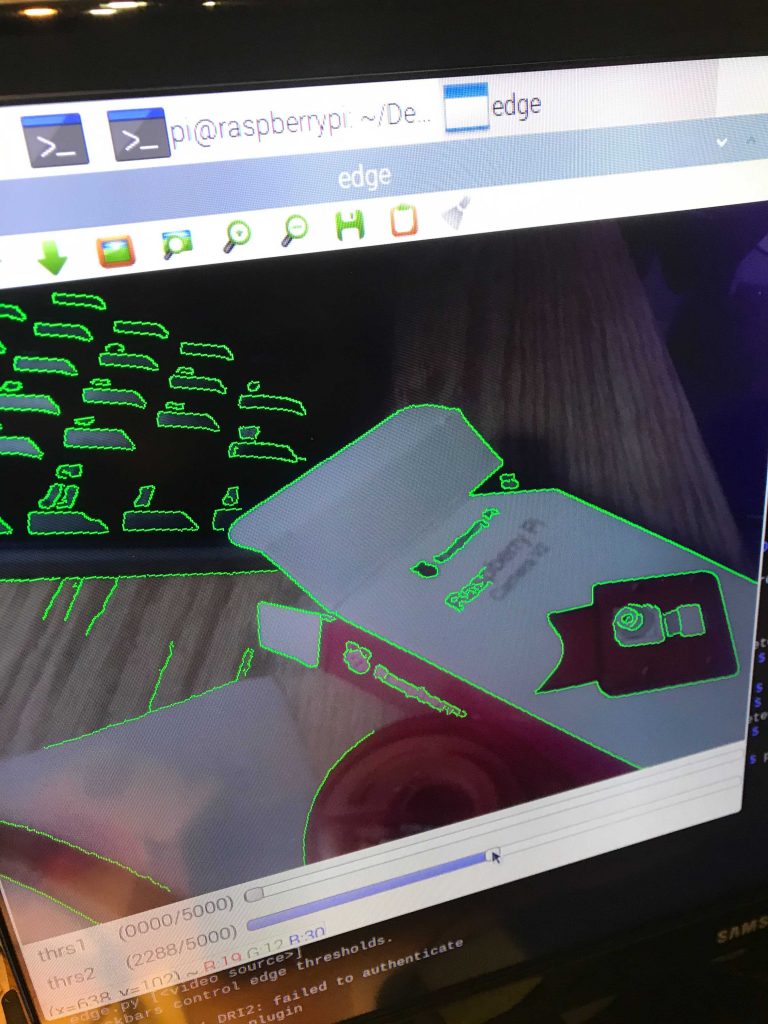

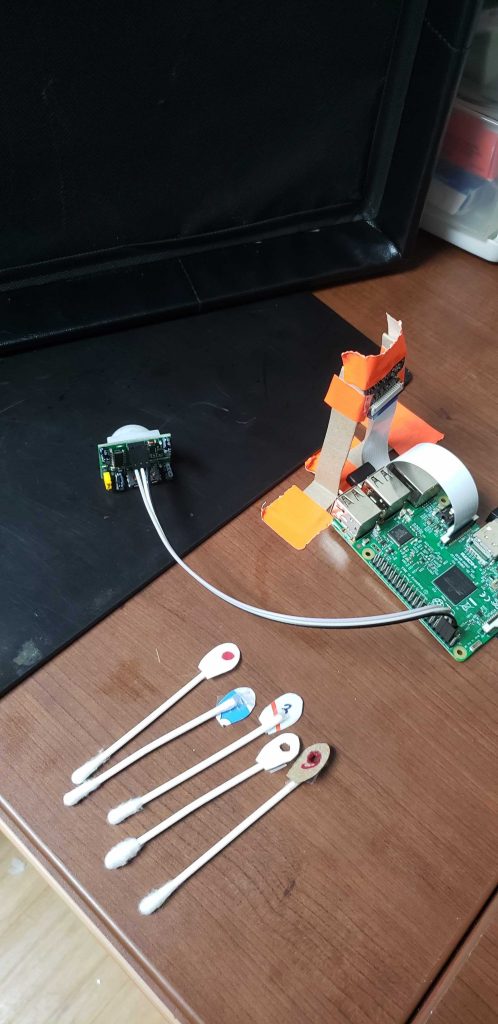

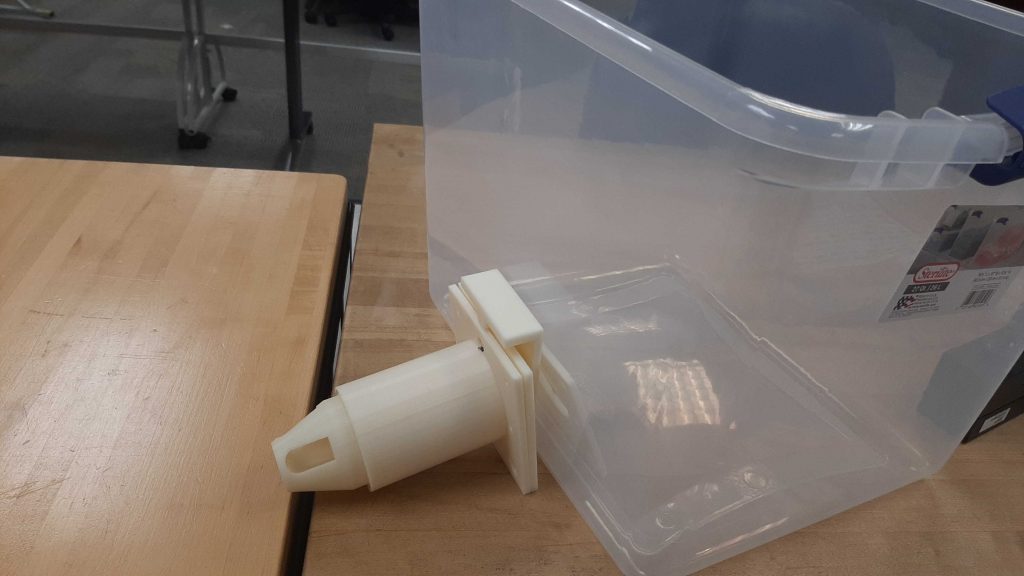

This week, team Macro Mice was able to run additional tests with the live mice. This time the mice had newly punctured holes in their ears, which was extremely helpful. While we are unable to show any of these tests due to university research restrictions, we did learn some valuable information. In the tunnel, the ear tends to fold over the hole, so we have begun to think about new ways to position the mouse in the tunnel. We also realized that our current camera setup will most likely not be able to pick up the contrast between the ear hole and the mouse's body, since they are so close in color. We have purchased IR cameras to help mitigate this. We will continue to aim for calculating the area of the mouse's ear hole to give the researcher a better estimate of the mouse's regeneration process. Right now, we see that the reference object has to be around the same size as the hole, because when the size difference between the hole and the reference is large, the proportion used to calculate the area does not work as well. On the physical side of the prototype, we are also using observations made during the first full test to modify the enclosure. By testing new window angles and adding reference geometry for the camera, we hope to increase the visibility of the ear holes for better data collection.
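For anyone curious about the proportion idea, here is a minimal sketch of how a reference object of known area could be used to scale a pixel count into a physical area. This is only an illustration and not our actual pipeline: the function name `estimate_hole_area_mm2` and all of the numbers are hypothetical, and it assumes the hole and the reference sit in the same plane at the same distance from the camera.

```python
def estimate_hole_area_mm2(hole_pixels, ref_pixels, ref_area_mm2):
    """Scale a pixel count to mm^2 using a reference object of known area.

    Hypothetical helper: assumes the hole and the reference are in the
    same plane and at the same distance from the camera.
    """
    if hole_pixels < 0 or ref_pixels <= 0 or ref_area_mm2 <= 0:
        raise ValueError("pixel counts and reference area must be positive")
    # Physical area covered by one pixel, derived from the reference object.
    mm2_per_pixel = ref_area_mm2 / ref_pixels
    return hole_pixels * mm2_per_pixel


# Made-up example values: a 3.14 mm^2 reference that covers 500 pixels,
# and an ear hole that covers 450 pixels in the same frame.
area = estimate_hole_area_mm2(hole_pixels=450, ref_pixels=500, ref_area_mm2=3.14)
print(f"Estimated hole area: {area:.3f} mm^2")
```

In this sketch, any miscount in `ref_pixels` shifts the whole scale factor, so the reference needs to be segmented as carefully as the hole itself.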

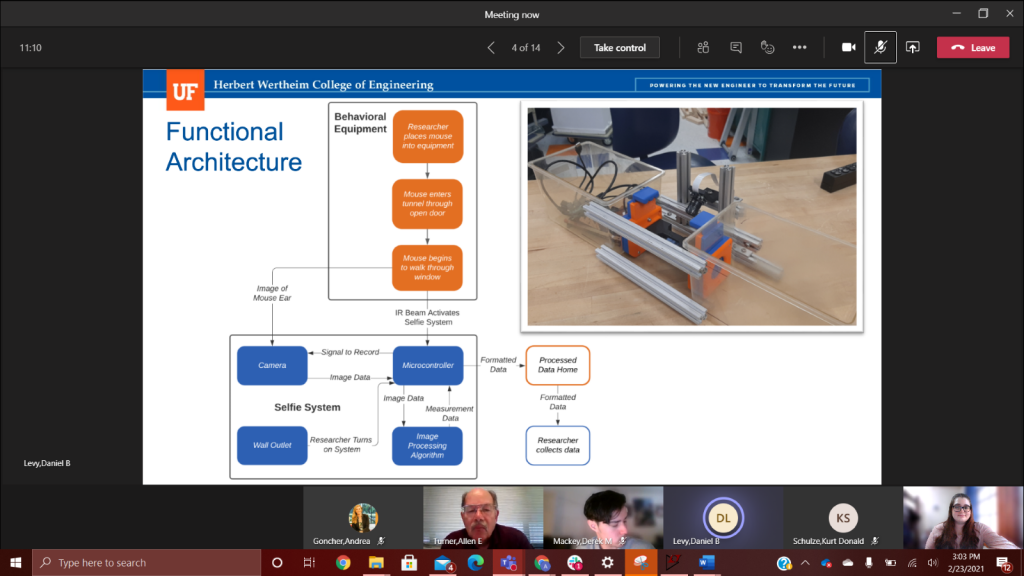

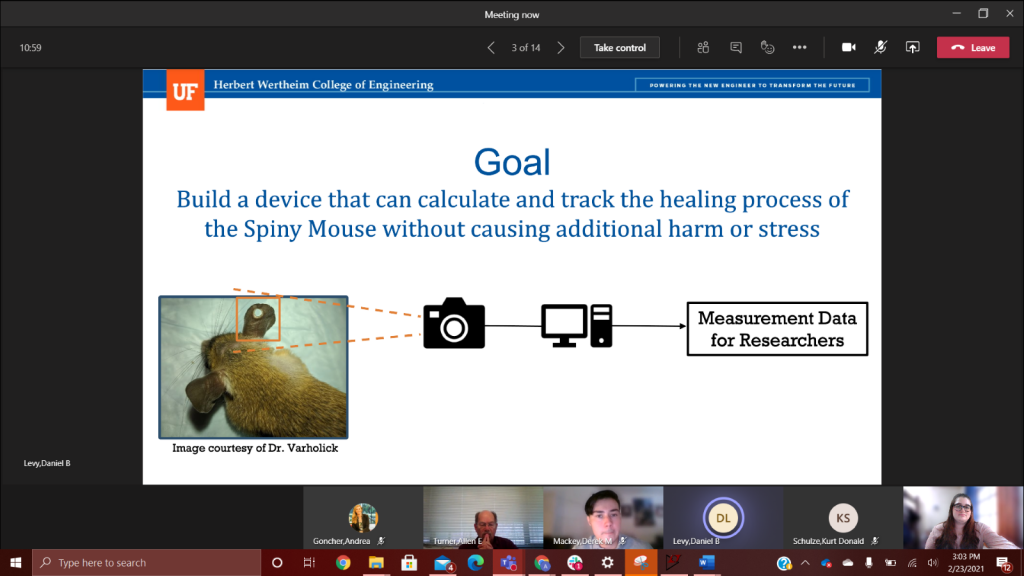

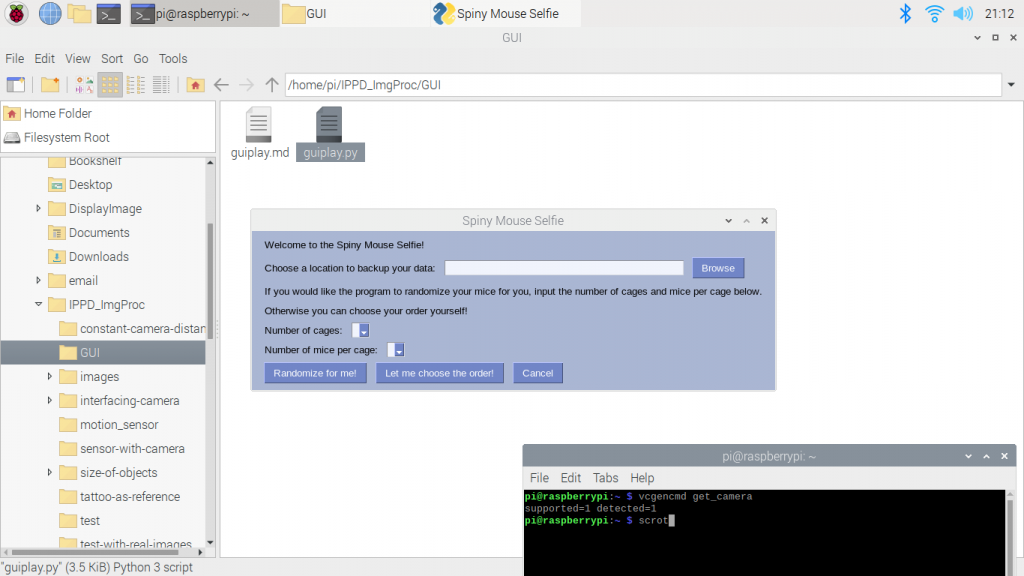

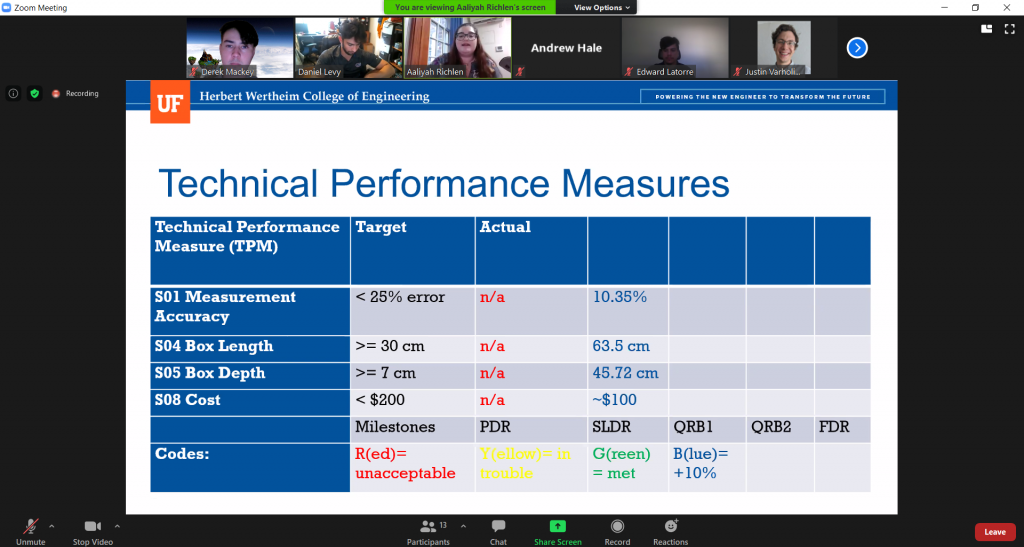

We also had our QRB2 this week. It gave us a chance to show our current prototype to multiple professors on campus and get some feedback on our setup. They mostly recognized the problems we had already identified, but they also gave us a useful idea: when we create our own videos with the severed ears, we should use a similarly colored background. Check out some screenshots from our presentation below!