Today we will be presenting at the Reitz for the last time! Our final project hype video is below:

The team is glad to be graduating, but at the same time, we are also sad. These past two semesters have been an amazing time together as one of the smaller teams, with only 4 members! The team has grown close over these past 8 months and will stay in touch as we all go on to graduate school. Altogether, we've learned a lot from this project:

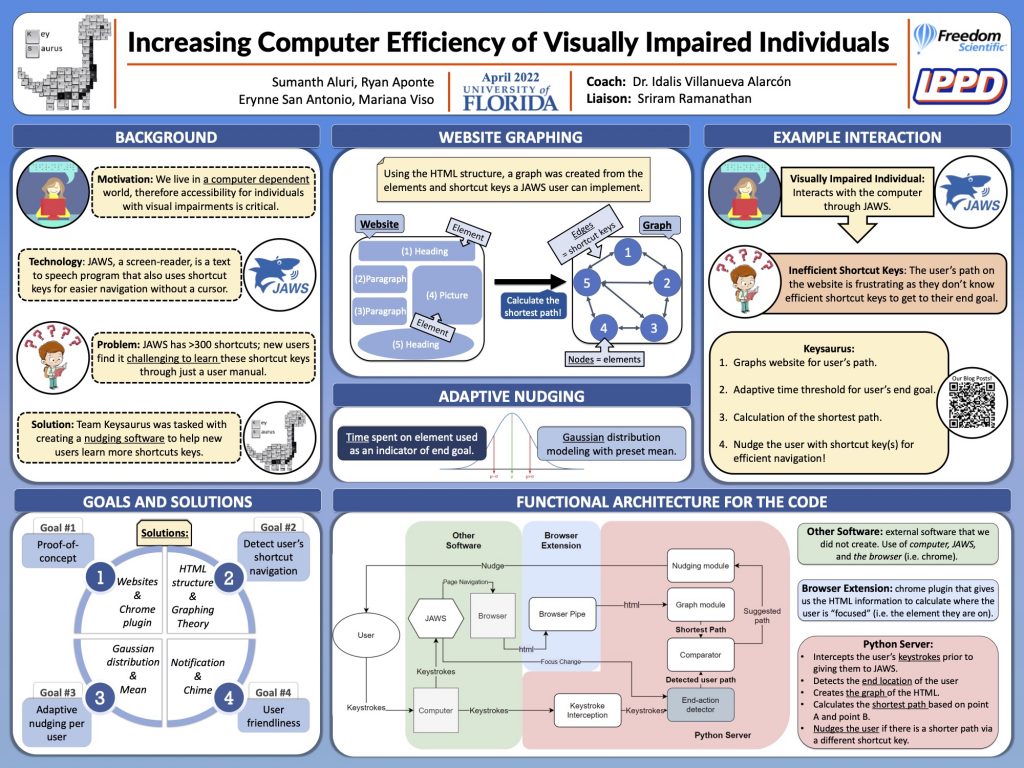

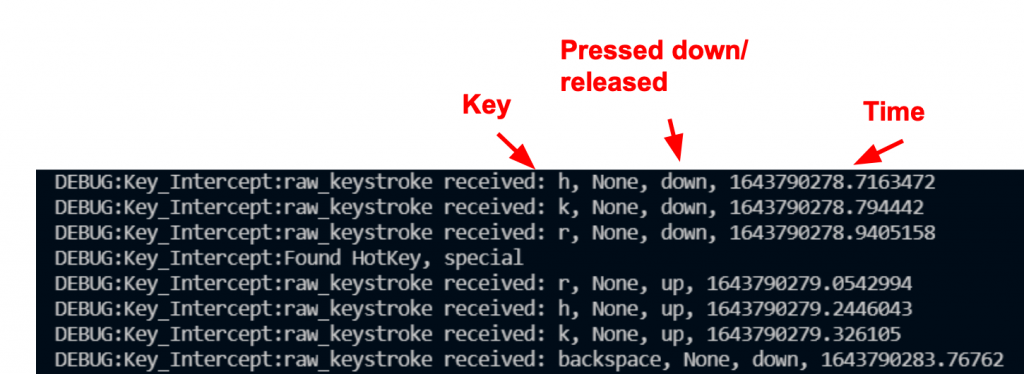

- We've developed a deeper understanding of web development, along with experience coding together on a long-term project.

- We've learned how to tailor the depth of content in our presentations to the audience we're presenting to.

- We've become more aware of how to make our presentations accessible to people with visual impairments.

We would like to shout out our amazing liaison, Mr. Sriram Ramananthan, who guided the team through our project's ups and downs. Without our liaison, our presentations would not have been as strong or as well tailored to visually impaired audiences. We would also like to shout out our wonderful coach, Dr. Idalis Villanueva, who was phenomenal at arranging meetings with University professors and the DRC. Without our coach, our project would not have been as fleshed out, and we would not have completed user testing. All in all, this team could not have done as much without our coach and liaison. We appreciate you both!