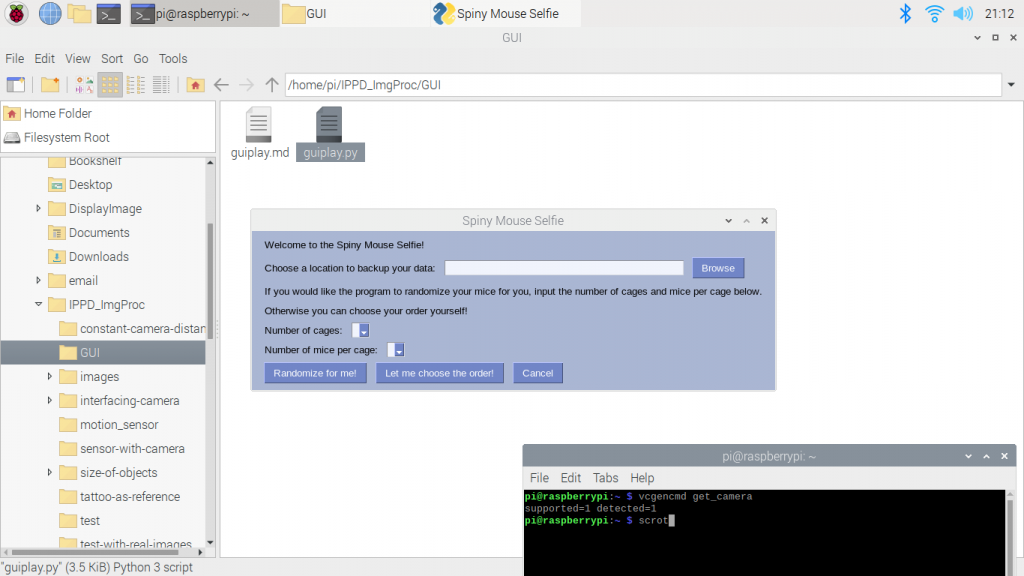

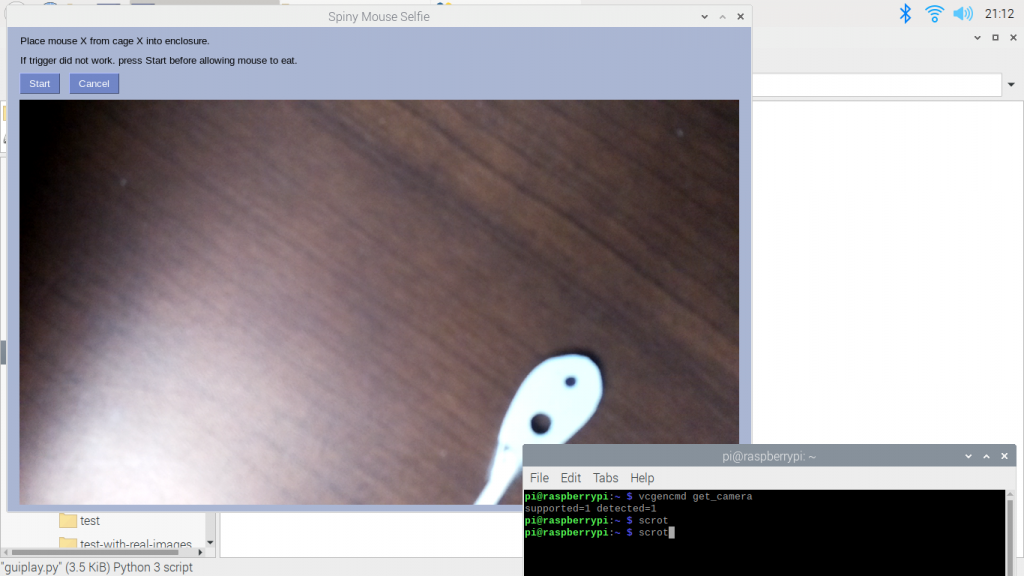

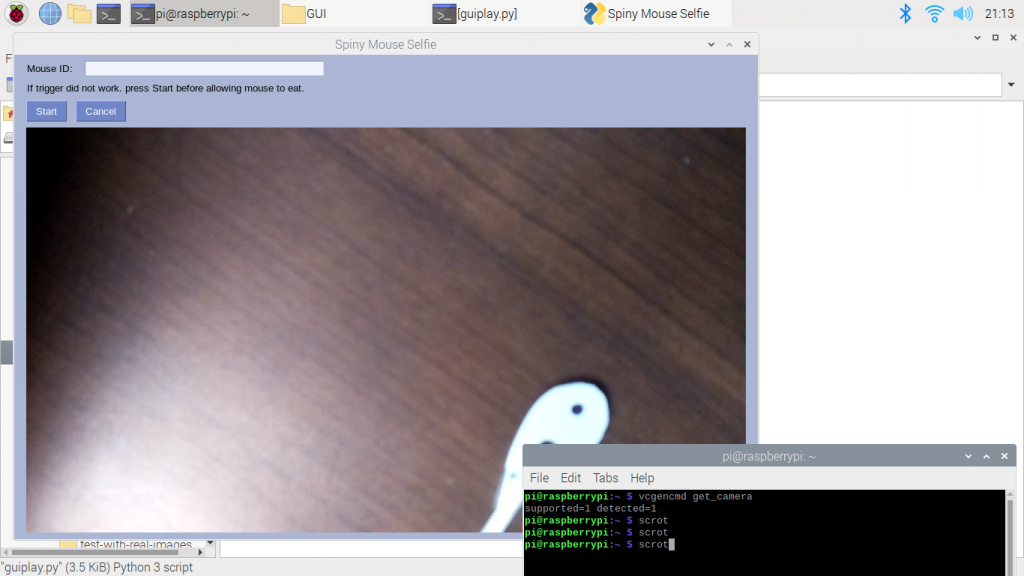

We spent a lot of time on the GUI for our program, since it makes the system easier for researchers to use. The program starts by asking where the researcher would like the data saved and how many mice they have. The user can then either have the program randomize the order of the mice or choose their own order. Next, a new window opens that either asks for a specific mouse to be run or shows a textbox where the user can enter the mouse. From there, the user can click Start to start the camera or wait for the sensor to start it. The video feed is then shown in the GUI. Check out some screenshots below!
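To give a rough idea of the ordering step, here is a minimal Python sketch of how a run order could be built from the number of mice. It is an illustration only, not our actual GUI code: the function name `build_run_order`, the 1-based mouse IDs, and the optional `seed` parameter are all assumptions made for this example.

```python
import random


def build_run_order(num_mice, randomize, seed=None):
    """Return the order in which mice are run (hypothetical helper).

    Mice are assumed to be labeled 1..num_mice. When `randomize` is False,
    the mice are simply returned in ascending order.
    """
    if num_mice < 1:
        raise ValueError("num_mice must be at least 1")

    mouse_ids = list(range(1, num_mice + 1))
    if not randomize:
        return mouse_ids

    # A dedicated Random instance keeps the shuffle reproducible when a
    # seed is given, without touching the global random state.
    rng = random.Random(seed)
    return rng.sample(mouse_ids, k=len(mouse_ids))


fixed_order = build_run_order(5, randomize=False)
random_order = build_run_order(5, randomize=True, seed=42)
print("Fixed order: ", fixed_order)
print("Random order:", random_order)
```

In the manual mode described above, the list would instead be filled in from whatever the user types into the textbox.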

Using the pixels-per-metric ratio, we were hoping to have OpenCV recognize the hole in each mouse's ear and apply thresholding so that we could count the pixels that make up the ear cavity and convert that count to a physical size. Getting the program to recognize only the hole has been more difficult than expected and will require a lot more work.
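The idea behind the measurement can be sketched in a few lines of plain Python. This is a simplified illustration under stated assumptions, not our working pipeline: it assumes a grayscale image stored as a nested list, that hole pixels are darker than a hand-picked threshold, and that the pixels-per-metric ratio is given in pixels per millimeter. The function name `ear_hole_area` is hypothetical. In OpenCV, the same two steps would roughly correspond to `cv2.threshold` followed by `cv2.countNonZero`.

```python
def ear_hole_area(gray, threshold, pixels_per_metric):
    """Count dark pixels and convert the count to a physical area.

    gray: 2D list of grayscale values (0-255).
    threshold: values strictly below this are treated as hole pixels
        (assumption: the hole appears darker than the ear).
    pixels_per_metric: pixels per unit length, e.g. pixels per mm.
    """
    hole_pixels = sum(1 for row in gray for value in row if value < threshold)

    # Each pixel covers (1 / pixels_per_metric)^2 square units, so the
    # area is the pixel count divided by the squared ratio.
    area = hole_pixels / (pixels_per_metric ** 2)
    return hole_pixels, area


# Tiny synthetic "ear": bright tissue (200) with a dark 2x2 hole.
image = [
    [200, 200, 200, 200],
    [200, 30, 40, 200],
    [200, 35, 25, 200],
    [200, 200, 200, 200],
]

pixels, area = ear_hole_area(image, threshold=100, pixels_per_metric=2.0)
print(f"{pixels} hole pixels, area = {area} square mm")
```

A global threshold like this counts every dark pixel in the frame, not just the hole, which is exactly the part that has been giving us trouble.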

We’ve also made progress on the behavioral unit this week! After multiple iterations of testing, we finally have a completed behavioral unit, which has been delivered to our liaison for further testing. Going forward, we plan to combine the Selfie System with the Behavioral Unit so we can begin our first tests of the full prototype. Check out some of the images of the behavioral unit below!