We are nearing the end of initial development for the complete app. The individual pieces are being finalized, and many of the components are now being integrated. The image processing algorithm is functionally complete but still needs a few minor bug fixes. It now identifies weather events in the tile images, finds each event's center, distance, and angle relative to the user, calculates its rainfall or snowfall rate, packages the results as JSON, and sends them to the server. The server can now pass this data to the app, and we are working on turning it into language for the user.
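
To make the distance and angle step concrete, here is a minimal sketch in Python of how one event could be measured relative to the user and packaged as JSON. It is an illustration under stated assumptions, not our actual code: the function name `describe_event`, the JSON field names, and the `km_per_pixel` scale factor are all hypothetical, and it assumes the user's position and the event's center are already known as pixel coordinates on the same tile.

```python
import json
import math


def describe_event(user_xy, center_xy, km_per_pixel, rate_mm_per_hr, kind):
    """Return a dict describing one weather event relative to the user.

    All names and fields here are hypothetical placeholders.
    """
    # Pixel offsets: x grows eastward, image y grows downward,
    # so flip the y difference to make north positive.
    dx = center_xy[0] - user_xy[0]
    dy = user_xy[1] - center_xy[1]

    # Straight-line distance on the tile, converted with an assumed scale.
    distance_km = math.hypot(dx, dy) * km_per_pixel

    # Compass bearing: 0 degrees is north, increasing clockwise.
    bearing_deg = math.degrees(math.atan2(dx, dy)) % 360

    return {
        "kind": kind,
        "distance_km": round(distance_km, 1),
        "bearing_deg": round(bearing_deg, 1),
        "rate_mm_per_hr": rate_mm_per_hr,
    }


# Example: an event 100 pixels due east of the user, at 0.5 km per pixel.
event = describe_event((128, 128), (228, 128), 0.5, 2.5, "rain")
payload = json.dumps({"events": [event]})
```

Passing the arguments to `atan2` as `(dx, dy)` rather than `(dy, dx)` is what turns the usual mathematical angle into a compass bearing measured clockwise from north.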

The radar screen and weather alerts screen are coming together as well. As mentioned above, the radar screen is gaining the ability to translate the JSON data into English text. It will also display the real-time radar image for low-vision or sighted users who would like it. The weather alerts screen is in its last few steps: the app can already fetch weather alerts from the API, so what remains is improving the UI and formatting.
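
As a rough idea of what translating the JSON data into English could involve, the Python sketch below turns one event into a spoken-style sentence. It is only an illustration: the field names (`kind`, `distance_km`, `bearing_deg`, `rate_mm_per_hr`), the units, and the sentence wording are assumptions for the example, not the format the app actually uses.

```python
import json

# Eight compass points, each covering a 45-degree slice centered on its heading.
COMPASS = [
    "north", "northeast", "east", "southeast",
    "south", "southwest", "west", "northwest",
]


def compass_direction(bearing_deg):
    """Map a bearing (0 = north, clockwise) to the nearest compass point."""
    index = int((bearing_deg % 360 + 22.5) // 45) % 8
    return COMPASS[index]


def event_to_sentence(event):
    """Build one English sentence from a (hypothetical) event record."""
    direction = compass_direction(event["bearing_deg"])
    return (
        f"{event['kind'].capitalize()} {event['distance_km']:.0f} kilometers "
        f"to the {direction}, falling at "
        f"{event['rate_mm_per_hr']:.1f} millimeters per hour."
    )


# Example input, shaped the way we assume the server might send it.
raw = '{"events": [{"kind": "rain", "distance_km": 50.0, "bearing_deg": 90.0, "rate_mm_per_hr": 2.5}]}'
sentences = [event_to_sentence(e) for e in json.loads(raw)["events"]]
# sentences[0] == "Rain 50 kilometers to the east, falling at 2.5 millimeters per hour."
```

Reducing the bearing to eight compass points keeps the sentence short enough to be comfortable when read aloud by a screen reader.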

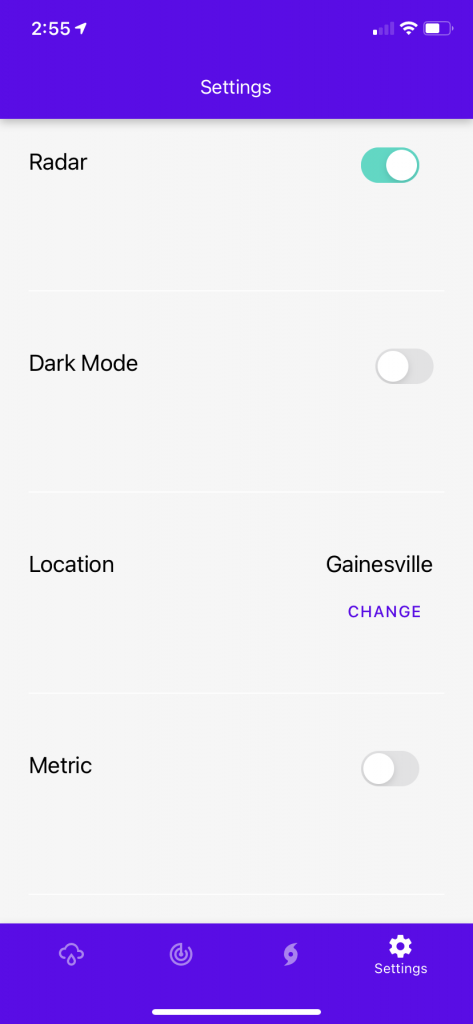

The settings screen is nearing completion. Most of the desired app customizations are in place, and we are now fixing the bugs. Some of these settings can only be tested once other screens are completed, so this task is currently blocked.

On Tuesday of next week we are presenting our progress to Freedom Scientific, and we will be demoing our app. We are all really excited and proud of the work we’ve done. We can’t wait to impress the company with how far our application has come, and we are eager to get it into the hands of members of the blind and low-vision community to get their impressions.