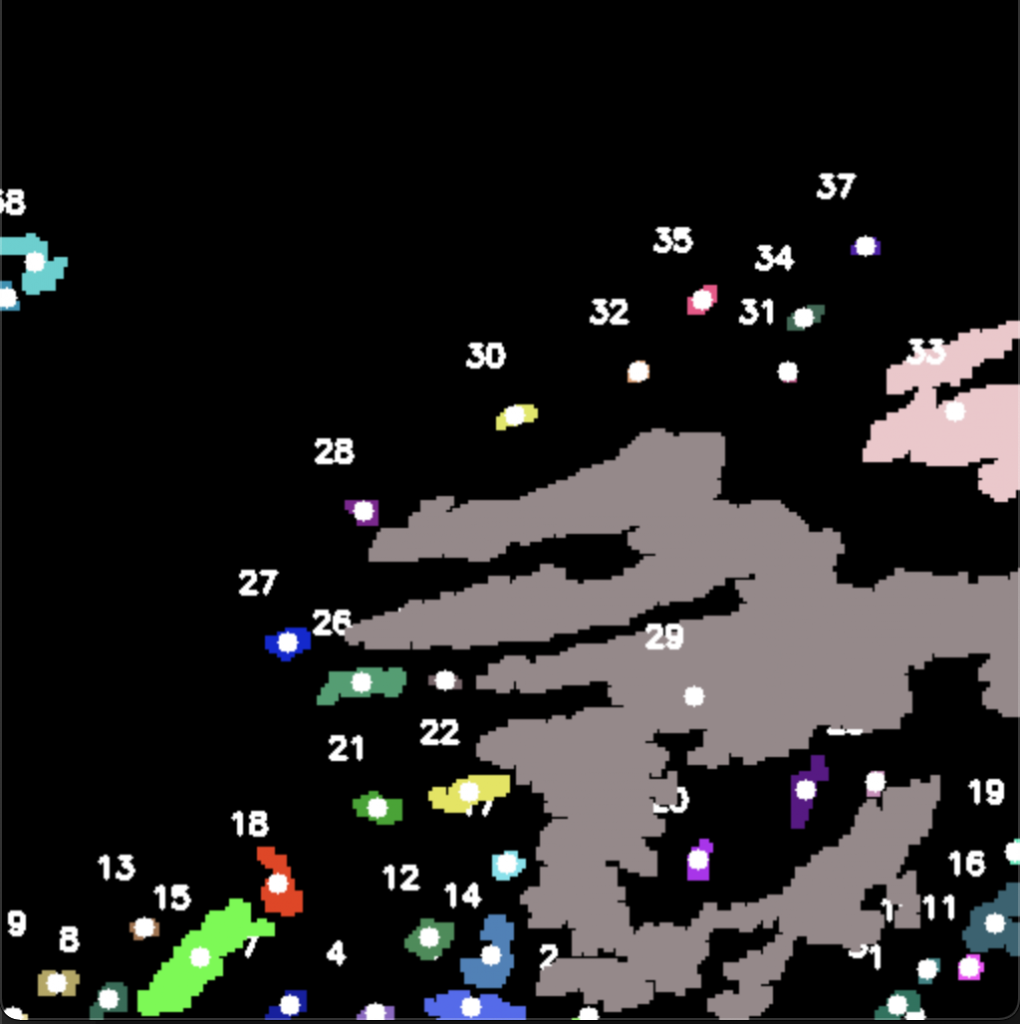

We are nearing the end of the image processing algorithm! If you recall, we’ve been able to fetch weather radar images from our API and perform basic edge detection on them. Since then, we’ve fixed some of the bugs we were having, and our algorithm can now detect “blobs” on the screen (i.e., rain or snow clouds) and find the “center” of each one. These are major steps toward finishing the algorithm, since we can now programmatically find the points of interest in the picture. We’ve also been able to calculate the intensity of each of these events and determine whether each one is a rain cloud or a snow cloud. From here, we are going to package the most critical information into a data structure to send back to the application for the last bit of processing. This processing involves turning the information into language for the user to listen to, as well as calculating the distances of these events relative to the user’s specified location.
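To make the blob and distance steps a bit more concrete, here is a minimal Python sketch of the general idea. It is not our actual code. It assumes the radar image has already been reduced to a 2D grid of intensity values, uses a simple flood fill to group neighboring pixels into blobs, and takes the average pixel position as the "center". The names `WeatherEvent`, `find_blobs`, and `haversine_km` are made up for illustration, and the step that converts a pixel position into latitude/longitude is left out because it depends on the radar image's projection.

```python
from collections import deque
from dataclasses import dataclass
from math import asin, cos, radians, sin, sqrt


@dataclass
class WeatherEvent:
    """Hypothetical record describing one detected blob."""
    center_row: float
    center_col: float
    size_px: int
    mean_intensity: float


def find_blobs(grid, threshold=1):
    """Group 4-connected cells with value >= threshold and summarize each group."""
    rows, cols = len(grid), len(grid[0])
    seen = [[False] * cols for _ in range(rows)]
    events = []
    for r in range(rows):
        for c in range(cols):
            if seen[r][c] or grid[r][c] < threshold:
                continue
            # Breadth-first flood fill collects every pixel in this blob.
            queue = deque([(r, c)])
            seen[r][c] = True
            pixels = []
            while queue:
                y, x = queue.popleft()
                pixels.append((y, x))
                for ny, nx in ((y + 1, x), (y - 1, x), (y, x + 1), (y, x - 1)):
                    inside = 0 <= ny < rows and 0 <= nx < cols
                    if inside and not seen[ny][nx] and grid[ny][nx] >= threshold:
                        seen[ny][nx] = True
                        queue.append((ny, nx))
            n = len(pixels)
            events.append(WeatherEvent(
                center_row=sum(y for y, _ in pixels) / n,  # centroid row
                center_col=sum(x for _, x in pixels) / n,  # centroid column
                size_px=n,
                mean_intensity=sum(grid[y][x] for y, x in pixels) / n,
            ))
    return events


def haversine_km(lat1, lon1, lat2, lon2):
    """Great-circle distance in kilometers between two lat/lon points."""
    lat1, lon1, lat2, lon2 = map(radians, (lat1, lon1, lat2, lon2))
    a = (sin((lat2 - lat1) / 2) ** 2
         + cos(lat1) * cos(lat2) * sin((lon2 - lon1) / 2) ** 2)
    return 2 * 6371.0 * asin(sqrt(a))  # 6371 km: mean Earth radius


# Toy grid: a 2x2 blob of intensity 2 and a single pixel of intensity 5.
grid = [
    [0, 0, 0, 0, 0],
    [0, 2, 2, 0, 0],
    [0, 2, 2, 0, 0],
    [0, 0, 0, 0, 5],
]
events = find_blobs(grid)
```

Once each blob's center has been mapped to a latitude and longitude, something like `haversine_km` could give the distance from the user's specified location. The list of `WeatherEvent` records is one possible shape for the data structure sent back to the application.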

Progress has continued on the other aspects of the application as well. We’ve kept developing and applying more unit tests to make sure everything is working as intended. The settings screen is progressing in line with our vision of the desired customizable features. The server is fully set up and running, and only requires some further tweaking to enable it to run the image processing algorithm and send the information back to the application. Once these pieces are in place, the app will be in an alpha state, ready for user feedback. We will continue to fix bugs and refine the UI design.