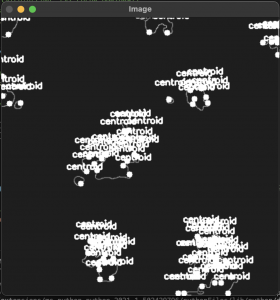

The team has been actively fleshing out the radar data interpretation algorithm, and we’ve made some decent strides in processing radar images. For one, we can now eliminate noise and study only the key weather events on the radar image. We can also outline each event and find points that lie inside it. The images are fetched from the API, so with a little more sophistication we’ll be able to test how the algorithm works in real time. Our next goals are to programmatically identify what each weather event is and what it is going to do. We’ve already come up with ideas for how to do this, so it’s just a matter of bringing them to life.
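
To make the noise removal and outlining steps concrete, here is a minimal Python sketch of one way they could be done. It is not our actual implementation. It assumes the radar image has already been converted to a 2D grid of intensity values, and the function name `find_events`, the `threshold` cutoff, and the `min_size` noise limit are all hypothetical names and values chosen for illustration.

```python
from collections import deque


def find_events(grid, threshold=20, min_size=3):
    """Group above-threshold cells into events and split each into outline and interior.

    grid:      2D list of intensity values (assumed input format).
    threshold: cells below this value are ignored (assumed cutoff).
    min_size:  regions with fewer cells than this are discarded as noise (assumed).
    """
    rows, cols = len(grid), len(grid[0])
    seen = set()
    events = []

    def neighbours(y, x):
        # 4-connected neighbours: up, down, left, right.
        return ((y - 1, x), (y + 1, x), (y, x - 1), (y, x + 1))

    for r in range(rows):
        for c in range(cols):
            if grid[r][c] < threshold or (r, c) in seen:
                continue

            # Breadth-first flood fill to collect one connected region.
            region = set()
            queue = deque([(r, c)])
            seen.add((r, c))
            while queue:
                y, x = queue.popleft()
                region.add((y, x))
                for ny, nx in neighbours(y, x):
                    in_bounds = 0 <= ny < rows and 0 <= nx < cols
                    if in_bounds and (ny, nx) not in seen and grid[ny][nx] >= threshold:
                        seen.add((ny, nx))
                        queue.append((ny, nx))

            if len(region) < min_size:
                continue  # too small to be a weather event; treat as noise

            # Interior cells have all four neighbours inside the region;
            # everything else in the region lies on the outline.
            interior = {
                cell for cell in region
                if all(n in region for n in neighbours(*cell))
            }
            events.append({"outline": region - interior, "interior": interior})

    return events
```

Calling `find_events(grid)` returns one dictionary per detected event, each holding a set of outline cells and a set of interior cells as `(row, column)` pairs. A flood fill keeps the sketch dependency-free; an image processing library would likely be a better fit for full-size radar images.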

Besides the image processing, we’ve also been making progress on the app itself. We’re developing the settings and weather alerts screens in parallel, and we’ve begun writing unit tests to ensure that these features work as intended. Once these are complete, we will run integration tests on the entire app, and by that point the image processing will be nearing completion.