Welcome back!

At the end of last semester, Pythax presented their complete system-level design to a panel of liaison engineers. The feedback from that presentation was positive and valuable, and the team was finally able to move out of the planning phase.

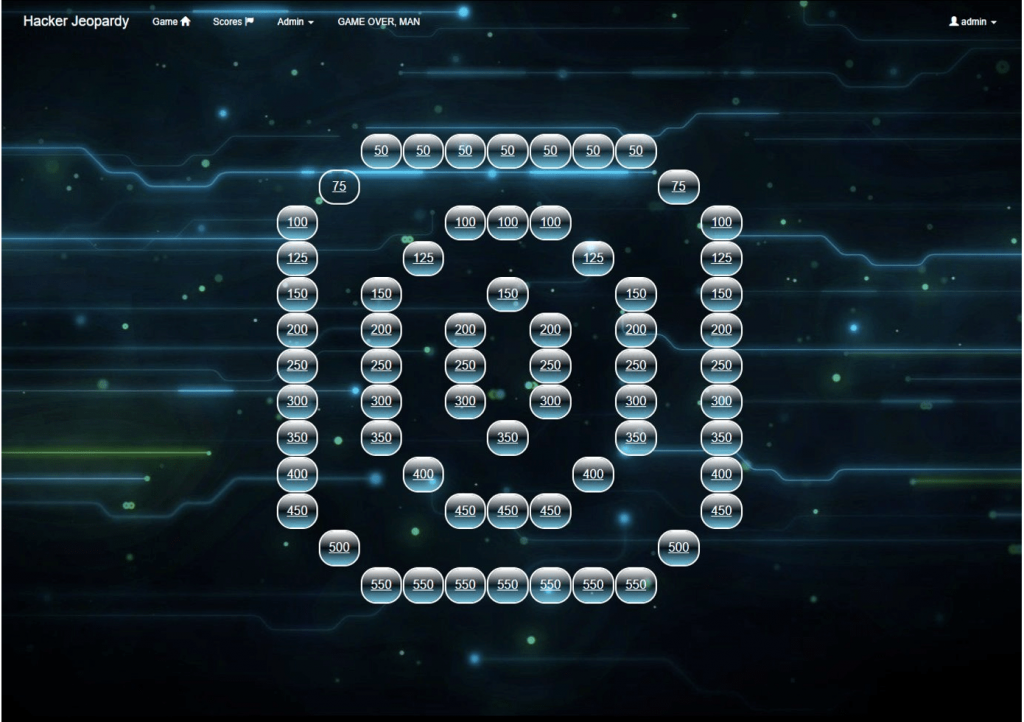

Now that the winter break is over, Pythax is ready to roll with some new sprints! The first of these includes implementing features for disabling questions on the game board and developing the backend for question hints. The team is also generating new hints for old challenges during this sprint and has begun implementing the UI changes proposed last semester.

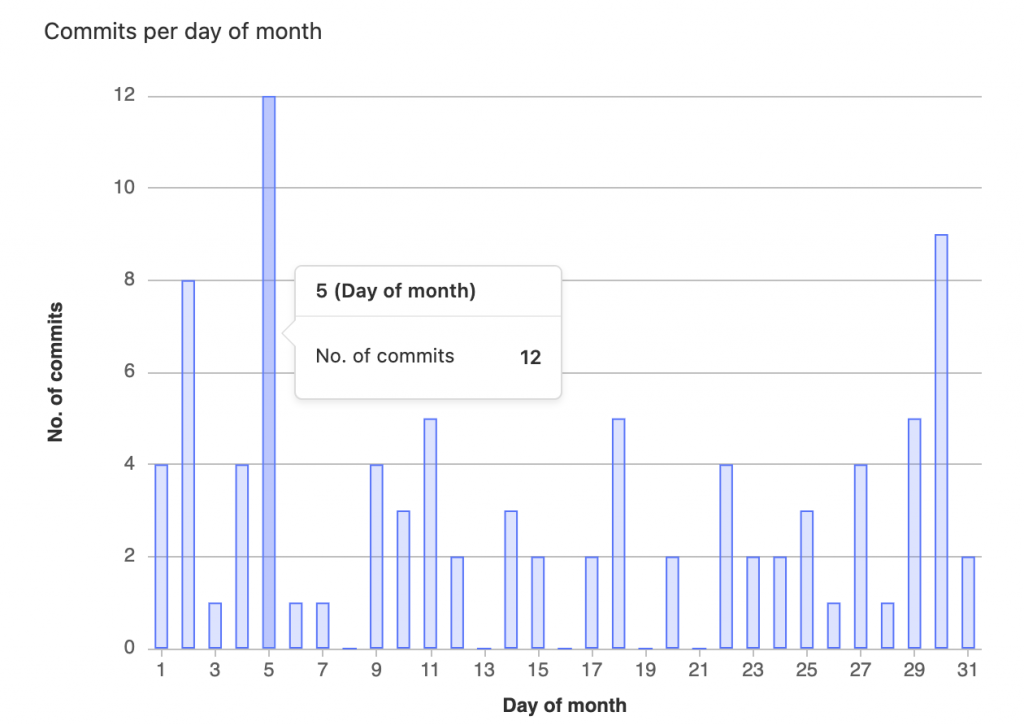

The GitLab repository is starting to see activity with new branches, commits, merges, and all the other fun stuff that comes along with version control. So far the team is mostly on target for meeting their deadlines, which bodes well for the first Quality Review Board (QRB1) of the semester.

We’re looking forward to a productive and eventful semester. Stay tuned for more updates on our progress! From us to you, Happy New Year, and go Gators!