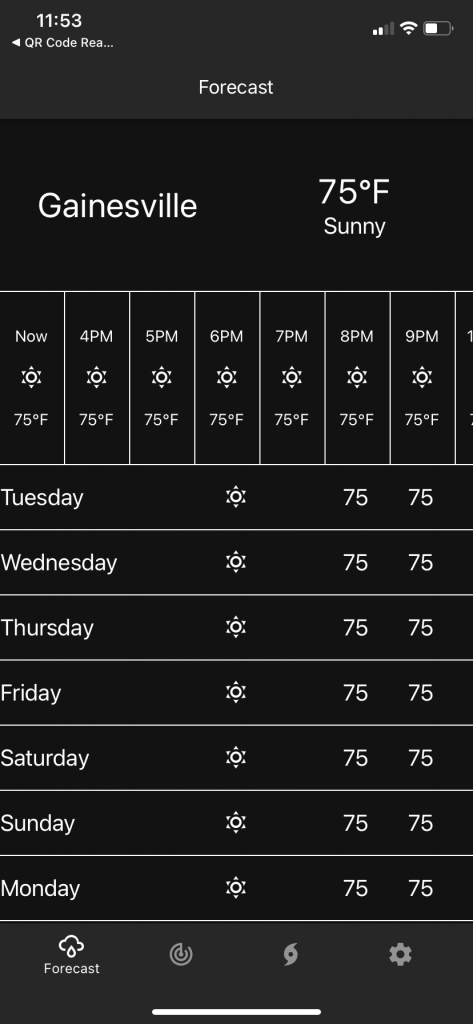

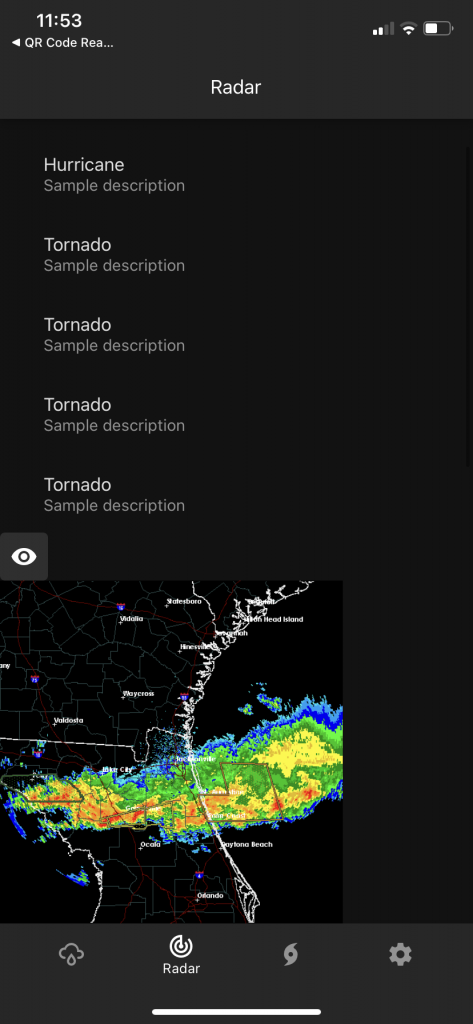

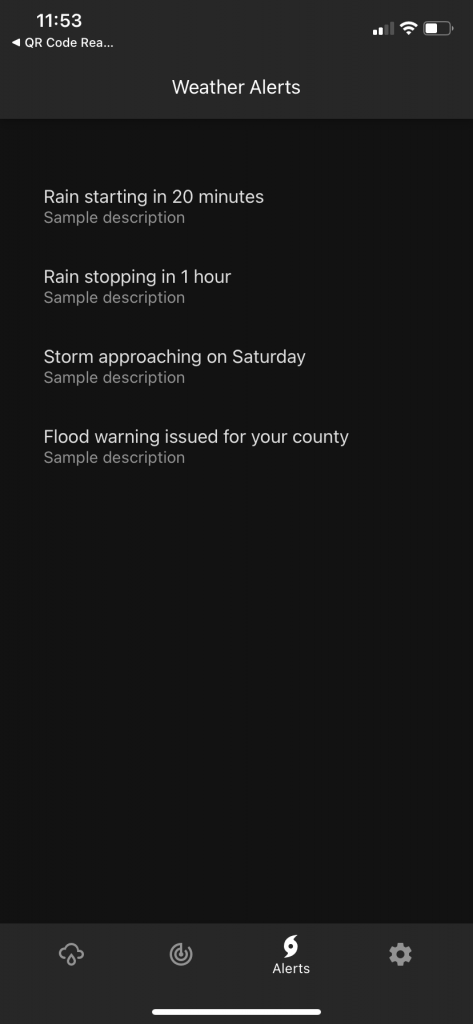

This week the team presented our initial prototype to a group of six coaches. The prototype consisted of three screens, reachable through a navigation bar at the bottom of the screen. The first was a forecast screen showing the current, hourly, and daily forecast. The second showed a radar image along with text describing what was in the image. The last showed severe weather alerts for the area. All of the information in the prototype was dummy data that we entered manually for the sake of demonstration; none of it was dynamic or generated programmatically.

Each of the components in our app had alternative text that is read aloud when VoiceOver or TalkBack is enabled on the device (these are the screen readers for iOS and Android, respectively). This allowed us to show how accessible our app would be to people who are blind or have low vision. We also ordered the components so that traversing them linearly from the top gives you the most relevant and important information first, with relevance gradually decreasing from there. This ensures that people using these accessibility features do not have to search the screen for the information they want; they can simply start at the top and move forward.
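To make the ordering idea concrete, here is a minimal sketch in plain TypeScript. It is not our app's actual code: the `AccessibleItem` type, the `priority` field, and the sample text are all hypothetical, made up for illustration. It only shows the principle that each element carries alternative text and that elements are arranged so the most important one is reached first.

```typescript
// Hypothetical model of one on-screen element (not the app's real component type).
interface AccessibleItem {
  id: string;
  altText: string; // text a screen reader would announce for this element
  priority: number; // assumed convention: lower number = more important
}

// Return the alternative texts in the order a screen reader user would hear
// them if the elements are laid out top to bottom by priority.
function readingOrder(items: AccessibleItem[]): string[] {
  return [...items] // copy so the caller's array is not mutated
    .sort((a, b) => a.priority - b.priority)
    .map((item) => item.altText);
}

// Hand-entered dummy data, in the same spirit as the prototype's content.
const forecastScreen: AccessibleItem[] = [
  { id: "daily", altText: "Daily forecast: rain likely on Thursday", priority: 3 },
  { id: "current", altText: "Current conditions: 72 degrees and sunny", priority: 1 },
  { id: "hourly", altText: "Hourly forecast: clouds arriving by 4 PM", priority: 2 },
];

for (const line of readingOrder(forecastScreen)) {
  console.log(line);
}
```

In a real app the layout order itself usually determines what the screen reader reaches first, so a sort like this would more likely inform how the screen is arranged than run at read time.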

Our app also adapts to the display settings of the device, changing its appearance depending on whether the device is set to dark or light mode. The images at the top of this post are screenshots from our app.

We were excited to show off our prototype, and we got some really useful feedback about how to improve it. We are eager to keep working on this and bring the vision to life. Our next steps are to keep adding functionality to the app and to start bringing in data from the weather API.